The Hidden Cost of Bad Labels

You spend hours fine-tuning your neural network architecture, only to discover your model performs poorly. Chances are, the issue isn't your code; it's your data. In machine learning, even high-quality datasets contain mistakes. Research from MIT shows that ImageNet, one of the most famous datasets, has roughly 5.8% label errors. That might sound small, but Professor Aleksander Madry warns that these errors set a ceiling on how well your model can ever perform. No amount of complex coding can overcome bad ground truth.

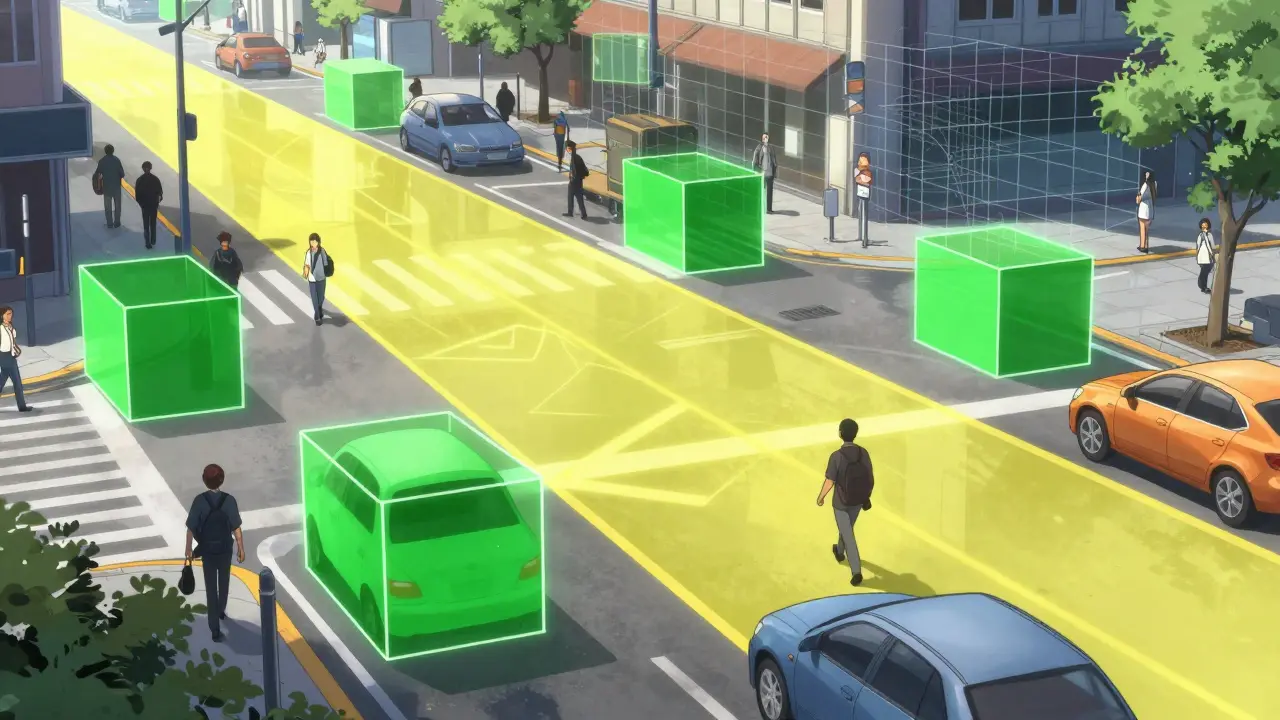

If you work in AI development, you know that data annotation is messy. A recent Encord report found that computer vision datasets average an 8.2% label error rate. You need to recognize these issues early, because ignoring them leads to "hallucinations" in production models. Whether you are building an autonomous vehicle system or a medical imaging tool, a single missed pedestrian or tumor in your training set could have real-world consequences.

Types of Labeling Mistakes You Must Know

To fix what you see, you first have to identify the pattern. Labeling errors aren't random; they cluster into specific categories. Understanding these helps you spot anomalies before training begins. Here are the main culprits you will encounter:

- Missing Labels: Objects or entities simply aren't marked. In object detection tasks, this accounts for about 32% of errors. For example, a safety dataset might miss a pedestrian standing behind a car, causing a self-driving algorithm to fail to detect them.

- Incorrect Fit: This happens when a bounding box is too loose or tight. Roughly 27% of visual labeling mistakes fall here. If a box includes the background instead of just the object, the model learns the wrong features.

- Ambiguous Examples: Sometimes, a piece of data fits two classes equally well. About 10% of errors stem from this confusion. Without clear guidelines, annotators make inconsistent choices, creating noise in the dataset.

- Misclassified Entity Types: In text recognition, a specific noun might be tagged as a location instead of an organization. This represents a significant portion of errors in Named Entity Recognition tasks.

These errors often creep in because annotation instructions change during a project. This problem, known as "midstream tag additions," occurs in 21% of projects where version control isn't maintained. Clearer guidelines reduce mistakes by nearly half, according to TEKLYNX analysis.
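One way to make the "Incorrect Fit" category measurable is to compare each annotated bounding box against a reference box and flag pairs that overlap poorly. The sketch below uses intersection over union (IoU) for that comparison. The reference boxes (for example, from a second annotator or a reviewed subset), the `(x_min, y_min, x_max, y_max)` box format, and the 0.7 threshold are all assumptions made for illustration, not values from any of the reports cited here.

```python
def iou(box_a, box_b):
    """Intersection over union for boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def flag_poor_fits(annotated, reference, min_iou=0.7):
    """Return sample IDs whose annotated box overlaps the reference box too little.

    `annotated` and `reference` map sample IDs to boxes. `min_iou` is a
    hypothetical threshold; tune it to your own annotation guidelines.
    """
    return [
        sample_id
        for sample_id, box in annotated.items()
        if iou(box, reference[sample_id]) < min_iou
    ]


annotated = {"img_001": (10, 10, 50, 50), "img_002": (0, 0, 100, 100)}
reference = {"img_001": (10, 10, 50, 50), "img_002": (20, 20, 60, 60)}
loose_boxes = flag_poor_fits(annotated, reference)
```

Here `img_002` is flagged because its box covers far more background than the reference box does. A check like this only catches loose or tight boxes; missing labels need a separate comparison of object counts.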

Detecting Errors with Automated Tools

Manually reviewing every single image or text sample is impossible for large datasets. You need technology to flag suspicious points. The field has moved beyond simple human review into algorithmic validation. Several tools have emerged as industry standards.

| Tool Name | Description | Best Use Case | Key Strength |
|---|---|---|---|
| Cleanlab | Open-source framework for finding label errors using confident learning | General Classification | Statistical rigor, high precision |
| Argilla | Human-centric platform for managing machine learning workflows | Text Generation/NLP | User-friendly interface, Hugging Face integration |
| Label Studio | Universal data labeling platform | Consensus Workflows | Supports multi-annotator comparison |
| Encord Active | Computer vision optimization and error detection | Object Detection | Specialized visualization for vision data |

Cleanlab uses a method called "confident learning." Instead of trusting every label at face value, it uses a model's predicted probabilities to estimate the noise distribution of your labels and flags the samples most likely to be wrong. Research from the Cleanlab team indicates it can catch up to 92% of errors, depending on the dataset. While powerful, it does require some coding skills. If you are non-technical, tools like Argilla offer a visual dashboard where you can inspect flagged samples.
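To make the idea behind confident learning more concrete, here is a simplified pure-Python sketch of its core step. It is not Cleanlab's API or its full algorithm: the function names are hypothetical, and the real library adds further steps on top of this. The sketch assumes `pred_probs` holds out-of-sample predicted probabilities (for example, from cross-validation), one row per sample and one column per class.

```python
def class_thresholds(labels, pred_probs, n_classes):
    """Per-class threshold: the mean predicted probability of class k
    over the samples that are currently labeled k."""
    thresholds = []
    for k in range(n_classes):
        probs_k = [p[k] for y, p in zip(labels, pred_probs) if y == k]
        # A class with no labeled samples can never be "confident".
        thresholds.append(sum(probs_k) / len(probs_k) if probs_k else float("inf"))
    return thresholds


def find_suspect_labels(labels, pred_probs):
    """Return indices of likely label errors, least trustworthy first."""
    n_classes = len(pred_probs[0])
    thresholds = class_thresholds(labels, pred_probs, n_classes)
    suspects = []
    for i, (given, probs) in enumerate(zip(labels, pred_probs)):
        # Classes the model is confident about for this sample.
        confident = [k for k in range(n_classes) if probs[k] >= thresholds[k]]
        if not confident:
            continue
        likely = max(confident, key=lambda k: probs[k])
        if likely != given:
            # Rank by how little probability the model gives the given label.
            suspects.append((probs[given], i))
    suspects.sort()
    return [i for _, i in suspects]


labels = [0, 0, 0, 1, 1, 1]
pred_probs = [
    [0.90, 0.10],
    [0.80, 0.20],
    [0.10, 0.90],  # labeled 0, but the model strongly prefers class 1
    [0.25, 0.75],
    [0.10, 0.90],
    [0.30, 0.70],
]
suspect_indices = find_suspect_labels(labels, pred_probs)
```

In this toy data only the third sample (index 2) is flagged. The per-class thresholds are what separate this from a plain "prediction disagrees with label" check: a sample is flagged only when the model is more confident than usual about a different class.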

Another effective strategy is multi-annotator consensus. One person labeling a picture is prone to fatigue. Studies show that having three annotators review each sample reduces error rates by 63%. However, this increases costs significantly, by about 200%. Many teams find a middle ground: use an automated tool to flag likely errors, then send those flags to humans for a final look.
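The consensus step can be sketched as a majority vote that returns no label when annotators disagree too much, so the sample goes to review instead. The function names and the two-thirds agreement threshold are assumptions for illustration. The cost helper shows where the 200% figure comes from if every annotation costs the same: three passes instead of one means two extra passes.

```python
from collections import Counter


def consensus(votes, min_agreement=2 / 3):
    """Majority vote over one sample's labels from several annotators.

    Returns (label, agreement). The label is None when no single label
    reaches `min_agreement`, which signals that the sample needs review.
    """
    counts = Counter(votes)
    label, top_count = counts.most_common(1)[0]
    agreement = top_count / len(votes)
    if agreement < min_agreement:
        return None, agreement
    return label, agreement


def extra_cost_percent(n_annotators):
    """Extra labeling cost versus one annotator, assuming equal cost per label."""
    return (n_annotators - 1) * 100


agreed = consensus(["car", "car", "truck"])
disputed = consensus(["car", "truck", "bus"])
```

With three annotators, two matching votes are enough to accept a label, while three different votes leave the sample unresolved.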

Requesting Corrections Efficiently

Finding the error is step one. Getting it fixed is step two. This process requires communication with your data annotation team or vendors. When you identify a batch of suspect labels, don't just point and say "this is wrong." Explain the context.

- Define the Issue Clearly: Specify if it is a missing label or a boundary error. Vague requests lead to vague fixes.

- Provide Examples: Show a few instances where the current label seems off. Visual evidence speeds up understanding.

- Update Guidelines: If the error is due to ambiguity, update your documentation and version the change. As noted earlier, midstream tag additions occur in 21% of projects.

- Track Changes: Keep an audit trail of what was corrected. This helps if the same error type appears later.
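The four points above can be captured in a small structured record, so that every request names the issue, cites a guideline rule, and leaves an audit trail. This is a minimal sketch: the field names, the issue type strings, and the rule identifier `"rule 3.2"` are hypothetical, and a real project would adapt them to its own guidelines and tooling.

```python
import json
from dataclasses import dataclass, asdict

# Issue types mirror the error categories described earlier in this article.
ISSUE_TYPES = {
    "missing_label",
    "incorrect_fit",
    "ambiguous_example",
    "misclassified_entity",
}


@dataclass
class CorrectionRequest:
    sample_id: str
    issue_type: str
    current_label: str
    proposed_label: str
    guideline_rule: str  # hypothetical identifier of the rule that applies
    note: str = ""
    status: str = "open"

    def __post_init__(self):
        # Reject vague requests up front: the issue must be a known type
        # and must point at a guideline rule.
        if self.issue_type not in ISSUE_TYPES:
            raise ValueError(f"unknown issue_type: {self.issue_type!r}")
        if not self.guideline_rule:
            raise ValueError("guideline_rule is required")


def append_to_audit_log(request, log_lines):
    """Append the request as one JSON line so corrections stay traceable."""
    log_lines.append(json.dumps(asdict(request), sort_keys=True))
    return log_lines


request = CorrectionRequest(
    sample_id="img_0042",
    issue_type="missing_label",
    current_label="",
    proposed_label="pedestrian",
    guideline_rule="rule 3.2",
    note="Pedestrian partly hidden behind the parked car.",
)
audit_log = append_to_audit_log(request, [])
```

Storing one JSON line per request keeps the log easy to append to and easy to search when the same error type appears again.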

Using platforms like Datasaur can streamline this. Their error detection feature highlights inconsistencies automatically. In one case study, a team identified 47 inconsistencies in a medical text dataset with one click. However, remember that automated suggestions aren't perfect. Domain experts still need to validate the fix. Dr. Rachel Thomas warns that relying too much on algorithms can create bias, especially against minority classes.

Building a Quality Assurance Workflow

You shouldn't wait until the end of a project to check labels. Integrate error detection into your MLOps pipeline. According to Argilla's documentation, a standard workflow involves four main stages:

- Load Dataset: Prepare your raw data with potential annotations.

- Train Initial Model: Generate predictions to compare against ground truth.

- Run Detection Algorithm: Use tools like Cleanlab to score confidence.

- Human Review: Have specialists correct the flagged items via a web interface.
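The four stages above can be wired together in a few functions. The sketch below is a toy, dependency-free illustration, not Argilla's or Cleanlab's code: the dataset is made up, the "model" is a leave-one-out nearest-centroid classifier on a single feature, and the detection step simply flags disagreement between label and prediction as a stand-in for a proper confidence-scoring tool.

```python
from statistics import mean


def load_dataset():
    """Stage 1: (feature, label) pairs. Index 4 is deliberately mislabeled."""
    return [
        (0.1, "cat"), (0.2, "cat"), (0.3, "cat"),
        (0.9, "dog"), (1.0, "cat"), (1.1, "dog"), (1.2, "dog"),
    ]


def predict_leave_one_out(data):
    """Stage 2: predict each sample with a model fitted on all the others,
    so a wrong label cannot vouch for itself."""
    predictions = []
    for i, (x, _) in enumerate(data):
        rest = data[:i] + data[i + 1:]
        centroids = {
            label: mean(f for f, lbl in rest if lbl == label)
            for label in {lbl for _, lbl in rest}
        }
        predictions.append(min(centroids, key=lambda lbl: abs(x - centroids[lbl])))
    return predictions


def flag_disagreements(data, predictions):
    """Stage 3: flag samples whose given label differs from the prediction."""
    return [
        i for i, ((_, given), pred) in enumerate(zip(data, predictions))
        if given != pred
    ]


def build_review_queue(data, predictions, flagged):
    """Stage 4: package flagged items for a human reviewer."""
    return [
        {"index": i, "given": data[i][1], "suggested": predictions[i]}
        for i in flagged
    ]


data = load_dataset()
predictions = predict_leave_one_out(data)
flagged = flag_disagreements(data, predictions)
review_queue = build_review_queue(data, predictions, flagged)
```

Only the mislabeled sample at index 4 reaches the review queue. The suggested label is a hint for the reviewer, not an automatic correction; the human still makes the final call.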

This cycle typically takes days, not weeks. A company using Encord Active reduced their error rate from 12.7% to 2.3% after implementing this flow, though it required significant person-hours. As of late 2025, regulatory bodies like the FDA are tightening rules on AI software validation. They expect rigorous validation of training data quality. Implementing these checks now avoids compliance headaches later.

Frequently Asked Questions

What exactly is a labeling error?

A labeling error occurs when the assigned class or annotation does not accurately represent the content of the data point. This includes missing objects, wrong bounding boxes, or incorrect text classifications. Even small error rates, like 5%, can severely degrade model accuracy.

Can I fix labeling errors manually?

Yes, manual review works for small datasets. However, for larger collections, using automated tools like Cleanlab or Argilla to flag potential errors is much faster. Manual review alone risks human fatigue and inconsistency.

How do I ask my annotation team to fix errors?

Be specific. Send them a list of IDs flagged as erroneous, include visual examples, and reference the exact guideline rule each label violates. Using a structured workflow rather than ad-hoc emails ensures better tracking.

Does correcting errors improve model performance?

Absolutely. Research from MIT indicates that correcting just 5% of label errors in a standard dataset like CIFAR-10 can increase test accuracy by 1.8%. It directly lifts the performance ceiling.

Are there free tools for detecting errors?

Yes, Cleanlab offers an open-source core framework that is widely used in the community. Other platforms like Argilla may have free tiers for smaller teams. Paid enterprise versions usually add features like advanced analytics and cloud storage.